...


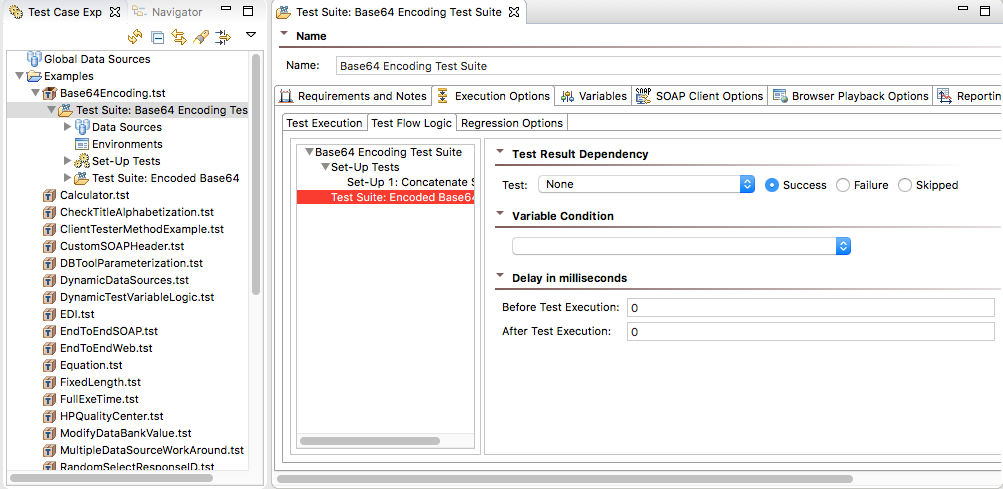

Accessing the Test Suite Configuration Panel

Double-click a test suite in the Test Case Explorer to access and modify its properties.

Associating Comments, Requirements, Tasks, Defects, and Feature Requests with Tests

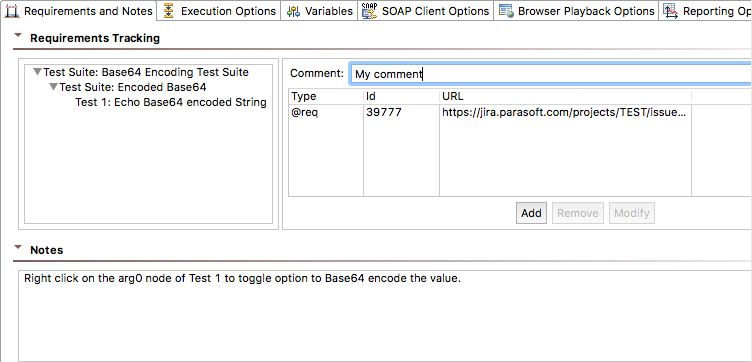

The Requirements and Notes tab of the test suite configuration panel allows you to associate requirements, defects, tasks, feature requests, and comments with a particular test in the test suite.

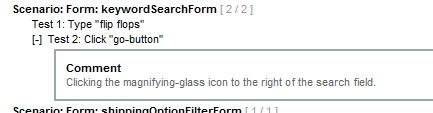

The HTML report will indicate the artifacts that are associated with each referenced test. For example, here is a report that references a test with an associated comment:

The requirements you define will appear in Structure reports and in a connected DTP system (if applicable). This helps managers and reviewers determine whether the specified test requirements were accomplished. For more information on Structure Reports, see Creating a Report of the Test Suite Structure.

Adding Associations and Comments

- Double-click the test suite node in the Test Case Explorer and click the Requirements and Notes tab.

- Choose the scope that the association and/or comments apply to in the Requirements Tracking section. You can apply this information to test suites, nested test suites, and tests.

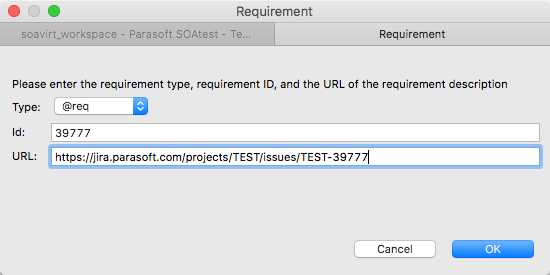

- Click the Add button and choose a tag from the Type drop-down menu. DTP will use this information to associate the test suite’s test cases with the specified element type. You can create custom tags as described in Indicating Code and Test Correlations. Default tags are:

  - @pr: for defects.

  - @fr: for feature requests.

  - @req: for requirements.

  - @task: for tasks.

Configuring Custom Defect/Issue Tracking Tags: You can configure defect/issue tracking tags to match the language that your organization uses to refer to defects or to feature requests. For details, see Indicating Code and Test Correlations.

- Enter an ID and a URL for the requirement and click OK.

If you enable the Preferences> Reports> Report contents option for Requirement/defect details, associations specified here will be shown in the HTML report. If a URL is specified, the HTML report will include hyperlinks.

- To add a comment (e.g., "this test partially tests the requirement" or "this test fully tests the requirement"), enter it into the Comment field. Comments specified here will be visible in HTML reports.

- To add more detailed notes for the test suite, enter them into the Notes field.

Specifying Execution Options (Test Flow Logic, Regression Options, etc.)


You can configure how tests in the suite execute, including whether:

...

These options are configured in the Execution Options tab, which has three sub-tabs: Test Execution, Test Flow Logic, and Regression Options.

Test Execution


You can customize the following options in the Test Execution sub-tab of the Execution Options tab.

Execution Mode

Enable the Tests run sequentially option to run each test and child test suite of this test suite separately, one at a time.

Enable the Tests run concurrently option to run all tests and child test suites of this test suite simultaneously.
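The difference between the two execution modes can be pictured with a small sketch. This is not SOAtest code: `run_test` and the test names are hypothetical stand-ins, and a thread pool is only one assumed way to model tests running at the same time.

```python
from concurrent.futures import ThreadPoolExecutor

def run_test(name):
    # Hypothetical stand-in for executing one test or child test suite.
    return f"{name}: done"

def run_sequentially(tests):
    # One test at a time, in the order listed.
    return [run_test(t) for t in tests]

def run_concurrently(tests):
    # All tests are submitted together and may overlap in time.
    with ThreadPoolExecutor(max_workers=max(1, len(tests))) as pool:
        return list(pool.map(run_test, tests))

tests = ["Test 1", "Test 2", "Child Suite"]
sequential_results = run_sequentially(tests)
concurrent_results = run_concurrently(tests)
```

Both functions produce the same set of results; only the timing differs. Tests that depend on state left behind by an earlier test are therefore a natural fit for sequential mode.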

Test Relationship

These options determine how SOAtest iterates through the rows of your data sources.

...

Enable the Tests run as group option (default for scenarios) to run all tests for each row of the data source. In this case, a data source row is chosen, and each test and child test suite is executed for that row. Once all children have executed, a new row is chosen and the process repeats. The Abort scenario on option becomes active when Tests run as group is enabled (see details about the Abort scenario on option).

Enable the Tests run all sub-groups as part of this group option to run tests contained in this test suite as direct children of this test suite. SOAtest will then iterate through them as a group. The Abort scenario on option becomes active when Tests run as group is enabled (see details about the Abort scenario on option).
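The Tests run as group behavior amounts to a row-major loop: choose a row, run every child for that row, then move on to the next row. The following is a minimal sketch of that ordering only; the rows and test names are hypothetical, and it is not SOAtest code.

```python
def run_as_group(rows, children):
    """Return the execution order when tests run as a group."""
    order = []
    for row in rows:            # a data source row is chosen
        for child in children:  # every test and child suite runs for that row
            order.append((row, child))
    return order

rows = ["row 1", "row 2"]
children = ["Login", "Search", "Logout"]
order = run_as_group(rows, children)
# All three children run for "row 1" before any child runs for "row 2".
```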

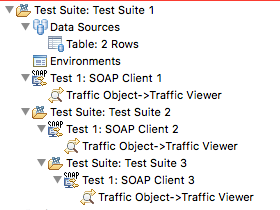

For example, consider the arrangement in the following test suite:

...


Advanced Options

Choose an option from the Multiple data source iteration drop-down menu to determine how SOAtest will iterate when multiple data sources are used within a single test suite whose tests are NOT individually runnable. If the data sources do not all have the same number of rows, iteration will stop at the last row of the smallest data source. Available options are:

...

Enable the Show all iterations option to count and show all data source iterations, including those for individually-runnable tests (enabled by default). When this option is disabled, SOAtest does not show all of the data source iterations for individually-runnable tests. In other words, if a test was parameterized on a data source with 50 rows, SOAtest would report that as a single test run. As a result, if there are failures on multiple data source rows, there could be more failures than test runs.
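The two counting rules above can be illustrated with a short sketch. It is an illustration only: the data is hypothetical, and pairing rows in lockstep is an assumption about one possible iteration mode, not a description of how SOAtest is implemented.

```python
# Part 1: two data sources with different row counts.
users = ["ann", "bob", "cy", "dee"]   # 4 rows
codes = [200, 500, 500]               # 3 rows (the smallest data source)

# Assumed lockstep pairing: iteration stops at the last row of the
# smallest data source, so "dee" is never used.
paired_rows = list(zip(users, codes))

# Part 2: one individually runnable test parameterized on `codes`.
# The hypothetical test passes only when the code is 200.
failures = sum(1 for code in codes if code != 200)

# With Show all iterations enabled, each row counts as a test run.
runs_when_shown = len(codes)
# With it disabled, the parameterized test is reported as a single run.
runs_when_hidden = 1
```

With the option disabled, this example reports two failures against one test run, which is the "more failures than test runs" situation described above.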

Test Flow Logic


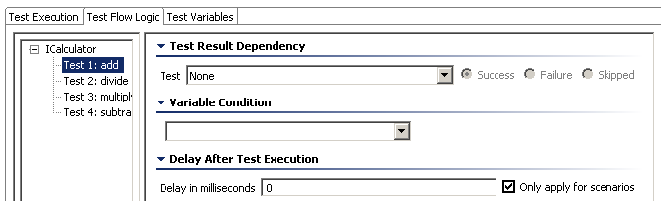

SOAtest allows you to create tests that are dependent on the success or failure of previous tests, setup tests, or tear-down tests. This helps you create an efficient workflow within the test suite. You can also influence test suite logic by creating while loops and if/else statements that depend on the value of a variable.

Options can be set at the test suite level (options that apply to all tests in the test suite), or for specific tests.

Test Suite Logic Options

In many cases, you may want to have SOAtest repeatedly perform a certain action until a certain condition is met. Test suite flow logic allows you to configure this.

...

- While variable: Repeatedly perform a certain action until a variable condition is met. This requires variables, described in Defining Variables, to be set.

- While pass/fail: Repeatedly perform a certain action until one test (or every test) in the test suite either passes or fails (depending on what you select in the "loop until" settings). If you choose this option (for instance, set to loop until one of the tests succeeds) and the overall loop condition is met, tests that had failures will be marked as successes. If the overall loop condition is not met, the individual tests that failed will be marked as failed. The console will show which tests passed and which tests failed, regardless of whether the loop condition is met.

...

For a step-by-step demonstration of how to apply while pass/fail test flow logic, see Looping Until a Test Succeeds or Fails - Using Test Flow Logic.
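The While pass/fail reporting rule can be sketched as follows, for the "loop until one of the tests succeeds" case. This is one reading of the description above, not SOAtest code: the test functions are hypothetical, and `max_loops` is an assumed bound introduced so the loop condition can go unmet.

```python
def loop_until_one_passes(tests, max_loops):
    """Repeat the suite until any test passes or max_loops is reached.

    `tests` maps a test name to a function that takes the loop index and
    returns True (pass) or False (fail). `max_loops` is an assumed bound.
    """
    last_results = {}
    for attempt in range(max_loops):
        last_results = {name: fn(attempt) for name, fn in tests.items()}
        if any(last_results.values()):
            # Loop condition met: failed tests are reported as successes.
            return {name: True for name in last_results}
    # Loop condition not met: individual failures stand.
    return last_results

tests = {
    "Poll status": lambda attempt: attempt >= 2,  # passes on the third loop
    "Check cache": lambda attempt: False,         # never passes
}
met = loop_until_one_passes(tests, max_loops=5)
not_met = loop_until_one_passes(tests, max_loops=2)
```

In `met`, "Check cache" is reported as a success even though it never passed, because the overall loop condition was satisfied. In `not_met`, both tests keep their failed status.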

Test-Specific Logic Options

The following options are available for specific tests:

...

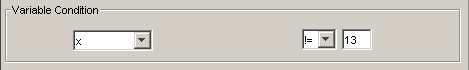

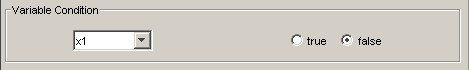

- Variable Condition: Allows you to determine whether or not a test is run depending on variables added to the Variable table (for more information on adding variables, see Defining Variables). If no variables were added, the Variable Condition options are not available. The following options are available if variables were defined:

  - Variable Condition drop-down: Select the desired variable from the drop-down list. The items in this list depend on the variables you added to the Variable table.

  - If the variable you select was defined as an integer, a second drop-down menu displays with == (equals), != (not equal), < (less than), > (greater than), <= (less than or equal to), >= (greater than or equal to). In addition, a text field is available to enter an integer. For example: If x != 13 (x does not equal 13), the test will run; if x does equal 13, the test will not run.

  - If the variable you select was defined as a boolean value, you will be able to select from either true or false radio buttons. For example: If variable x1 is false, the test will run; if x1 is true, the test will not run.

- Delay in milliseconds: Lets you set a delay before and/or after test execution.
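The Variable Condition check can be expressed as a small function. This is a minimal sketch under stated assumptions: `should_run` and the variable values are hypothetical, and the code only mirrors the six integer operators and the true/false choice listed above.

```python
import operator

COMPARISONS = {
    "==": operator.eq, "!=": operator.ne,
    "<": operator.lt, ">": operator.gt,
    "<=": operator.le, ">=": operator.ge,
}

def should_run(variables, name, op=None, expected=True):
    """Decide whether a test runs, given a variable condition."""
    value = variables[name]
    if isinstance(value, bool):
        # Boolean variables: compare against the selected true/false choice.
        return value is expected
    # Integer variables: apply the selected comparison operator.
    return COMPARISONS[op](value, expected)

variables = {"x": 13, "x1": False}
run_int = should_run(variables, "x", "!=", 13)          # condition: x != 13
run_bool = should_run(variables, "x1", expected=False)  # condition: x1 is false
```

Here `run_int` is `False` because x equals 13, so the test is skipped, while `run_bool` is `True` because x1 is false, so the test runs.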

Regression Options


The Regression Options tab allows you to customize how data sources are used in regression tests and which tests in the test suite have regression controls. Note that this tab is not applicable to web scenario tests. Available options are:

...

- Use data source column names and values: Associates data source column names and values to the data generated by the Diff control. For example, a request that used A=1, B=2 in a SOAP Client will be associated with the Diff control that has been labelled "A=1, B=2" and so on. When this option is selected, you can add and remove data source rows as you wish and the Diff will still map the content to the correct control—as long as the column names and values are unchanged. For more information on using data sources, see Parameterizing Tests with Data Sources, Variables, or Values from Other Tests.

- Regression Controls Logic: This table allows you to configure which tests in a test suite SOAtest should create regression controls for. For each test entered in the table, you can select Always or Never. Regression controls will be updated accordingly the next time you update the regression controls for the test suite.

Defining Variables


The Variables tab allows you to configure variables that can be used to simplify test definition and create flexible, reusable test suites. After a variable is added, tests can parameterize against that variable.

Understanding Variables

You can set a variable to a specific value, then use that variable throughout the current test suite to reference that value. This way, you don’t need to enter the same value multiple times, and if you want to modify the value, you only need to change it in one place.

...

Any test variables that are defined in test suite A will be visible to any tests within A, B, or C; variables defined in B will be visible only to B and C.
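This visibility rule behaves like nested lookup scopes. The sketch below assumes that suite B is nested in A and suite C is nested in B, as the sentence above implies; the variable names and values are hypothetical.

```python
from collections import ChainMap

suite_a = ChainMap({"host": "a.example"})     # outermost suite
suite_b = suite_a.new_child({"port": 8080})   # nested in A
suite_c = suite_b.new_child()                 # nested in B

# Variables defined in A are visible from A, B, and C.
host_seen_from_c = suite_c["host"]
# Variables defined in B are visible from B and C, but not from A.
port_seen_from_c = suite_c["port"]
port_visible_in_a = "port" in suite_a
```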

Adding New Variables

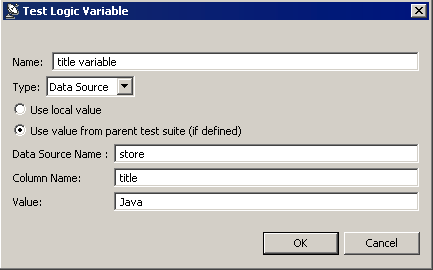

- Click the Add button.

- Enter a new variable name in the Name field.

- Select either Integer, Boolean, String, or Data Source from the Type box.

- Specify whether you want to use a local value or use a value from a parent test suite.

- Use value from parent test suite (if defined) - Choose this option if the current test suite is a "referenced" test suite and you want it to use a value from a data source in the parent test suite. See Using Test Suite References for details on parent test suites.

- Use local value - Choose this option if you always want to use the specified value—even if the current test suite has a parent test suite whose tests set this variable. Note that if you reset the value from a data bank tool or Extension tool, that new value will take precedence over the one specified here.

- (For data source type only) Specify the name of the data source and column where the appropriate variables are stored. The data source should be in the parent test suite (the test suite that references the current test suite).

- Enter the variable value in the Value field. If you chose Use local value, the variable will always be set to the specified value (unless it is reset from a data bank tool or Extension tool). If you chose Use value from parent test suite, the value specified here will be used only if a corresponding value is not found in the parent test suite.

- Click OK.
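The choice between a local value and a parent value comes down to a simple precedence rule for the variable's starting value. The following sketch is an assumption-laden illustration: `resolve` and its parameters are hypothetical, not part of SOAtest.

```python
def resolve(value, use_parent, parent_values, name):
    """Pick the starting value for a variable.

    use_parent=False models "Use local value"; use_parent=True models
    "Use value from parent test suite (if defined)".
    """
    if use_parent and name in parent_values:
        return parent_values[name]
    # Local value, or the fallback when the parent defines nothing.
    return value

parent = {"env": "staging"}
local = resolve("dev", use_parent=False, parent_values=parent, name="env")
inherited = resolve("dev", use_parent=True, parent_values=parent, name="env")
fallback = resolve("dev", use_parent=True, parent_values={}, name="env")
```

The sketch covers only the starting value. As noted in the steps, a value reset at runtime from a data bank tool or Extension tool takes precedence over it.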

Using Variables

Once added, variables can be:

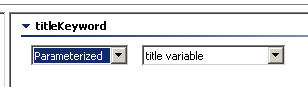

- Used via the "parameterized" option in test fields. For instance, If you wanted to set a SOAP Client request element to use the value from the

title variablevariable, you would config-ure it as follows: - Referenced within text input fields via the {var_name} convention. In the data source editor, you would use the

soa_envprefix to reference environment variables. For example,${soa_env:Variable}/calc_values.xlsx - Reset from a data bank tool (e.g., an XML Data Bank as described in Configuring XML Data Bank Using the Data Source Wizard).

- Reset from an Extension tool (as described below in Setting Variables and Logic Through Scripting).

- Used to define a test logic condition as described in Test Flow Logic.
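To show what a reference such as `${soa_env:Variable}/calc_values.xlsx` resolves to, here is a minimal sketch of placeholder expansion. It is not SOAtest's implementation: the `expand` function, the regular expression, and the variable values are assumptions made for illustration.

```python
import re

# Matches ${name}, capturing everything between the braces.
PLACEHOLDER = re.compile(r"\$\{([^}]+)\}")

def expand(text, variables):
    """Replace each ${name} with its value; leave unknown names untouched."""
    def lookup(match):
        return str(variables.get(match.group(1), match.group(0)))
    return PLACEHOLDER.sub(lookup, text)

# Hypothetical values for illustration.
variables = {"soa_env:Variable": "/data/inputs", "title": "Moby Dick"}
path = expand("${soa_env:Variable}/calc_values.xlsx", variables)
unknown = expand("${missing}/file.txt", variables)
```

Leaving unknown names untouched, rather than substituting an empty string, is a design choice in this sketch that makes an unresolved reference easy to spot in the output.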

Setting Variables and Logic Through Scripting


Very often, test suite logic and variables will depend on responses from the service itself. Using an Extension tool, you can set a variable in order to influence test flow execution. For example, if Test 1 returns a variable of x=3, then Test 2 will run.

...

For instance, you can add an XML Transformer tool to a test and extract a certain value from that test. Then, you can add an Extension output to the XML Transformer and enter a script to get the value from the Transformer. Finally, you can set up a second test to run only if the correct value is returned from the first test.

Monitoring Variable Usage


To configure SOAtest to show what variables are actually used at runtime, set Console preferences (Parasoft> Preferences> Parasoft> Console) to use normal or high verbosity levels.

...

Viewing such variables is useful for diagnosing the cause of any issues that occur.

Tutorial

For a step-by-step demonstration of how to use variables, see Creating Reusable (Modular) Test Suites.

Specifying Client Options

The Client Options tab is divided into the following sections:

General

You can configure the following general test suite options:

- Timeout after (milliseconds): If you do not want to use the default, select Custom from the drop-down menu and enter the desired time. The default value is 30000.

- Outgoing Message Encoding: Allows you to choose the charset encoding for outgoing messages for all non-SOAP test clients. You can also configure this setting globally in the Misc settings of the Parasoft Preferences (see Additional Preference Settings). You can configure the outgoing message encoding for SOAP clients in the SOAP settings.

- Cookies: Choose Custom from the drop-down menu and enable the Reset existing cookies before sending request option to clear the cookies between sessions.

SOAP

You can configure the following SOAP-related test suite options:

- Endpoint: Specifies the endpoint. If you would like to specify an endpoint for all tests within the test suite, enter an endpoint and click the Apply Endpoint to All Tests button.

- Attachment Encapsulation Format: Select Custom from the drop-down menu and select MIME, DIME, MTOM Always, or MTOM Optional. The default value is MIME.

- Outgoing Message Encoding: Allows you to choose the charset encoding for outgoing SOAP messages. You can also configure this setting globally in the Misc settings of the Parasoft Preferences (see Additional Preference Settings). You can configure the outgoing message encoding for non-SOAP clients in the General settings.

- SOAP Version: Select Custom from the drop-down menu and select either SOAP 1.1 or SOAP 1.2. The default value is SOAP 1.1.

- Constrain to WSDL: Choose Custom from the drop-down menu and enable one or both of the following options:

- Constrain request to WSDL: Require the tool to obtain values from the definition file.

- Constrain SOAP Headers to WSDL: Require the tool to obtain only the SOAP Header values from the definition file.

Specifying Browser Playback Options

The Browser Playback Options tab is divided into several sections:

...

- Authentication: Allows you to specify authentication settings as described below.

Authentication Settings

Basic, NTLM, Digest, and Kerberos authentication schemes are supported, and can be specified in this panel. You can enter a username and password for Basic, NTLM, and Digest authentication, and a service principal for Kerberos authentication.

...